Publications

Selected Publications

Here you will find selected publications from recent years. A comprehensive list of publications is available on the Google Scholar or DBLP page of Stefan Schneegaß.

- Keppel, Jonas; Strauss, Marvin; Haliburton, Luke; Weingärtner, Henrike; Dominiak, Julia; Faltaous, Sarah; Gruenefeld, Uwe; Mayer, Sven; Woźniak, Paweł W.; Schneegass, Stefan: Situated Artifacts Amplify Engagement in Physical Activity. In: Proceedings of the 2025 ACM Designing Interactive Systems Conference, vol. 2025 (2025). doi:10.1145/3715336.3735690

In the context of rising sedentary lifestyles, this paper investigates the efficacy of “Situated Artifacts” in promoting physical activity. We designed two artifacts that display users’ physical activity data within their homes – one physical and one digital. We conducted a 9-week, counterbalanced, within-subject field study with N = 24 participants to assess the impact of these artifacts on physical activity, reflection, and motivation. We collected quantitative data on physical activity and administered daily and weekly questionnaires, employing individual Likert items and standardized instruments, as well as conducted interviews post-prototype usage. Our findings indicate that while both artifacts act as reminders for physical activity, the physical artifact was superior in terms of user engagement. The study revealed that this can be attributed to the higher perceived presence and, thereby, enhanced social interaction, which acts as a motivational source for activity. In this sense, situated artifacts gently nudge toward sustainable health behavior change.

- Keppel, Jonas; Strauss, Marvin; Zhang, Shuoheng; Stroehnisch, Markus; Lewin, Stefan; Gruenefeld, Uwe; Degraen, Donald; Goedicke, David; Matviienko, Andrii; Schneegass, Stefan: The Impact of Bike-Based Controllers and Adaptive Feedback on Immersion and Enjoyment in a Virtual Reality Cycling Exergame. In: Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2025. doi:10.1145/3706599.3720096

Cycling exergames can increase enjoyment and promote high energy expenditure, making exercise more engaging and, therefore, supporting healthier lifestyles. To improve player experience in a virtual reality cycling exergame using a stationary bike, we investigated how different input and output techniques affect player engagement. We implemented a bike-based controller integrating button and shoulder-lean steering as input, combined with or without adaptive changes in bike inclination and resistance as output. The results of our study with 24 participants indicate that adaptive modes increase effort and perceived exertion. While button steering provides better pragmatic quality, shoulder-lean steering offers a more hedonic experience but requires more skill and effort. Still, this greater enjoyment fosters higher engagement, particularly when players enter a flow state where the increased physical demands become less noticeable. These findings underscore the potential of bike-based adaptive controllers to maximize player engagement and enhance VR cycling exergame experiences.

- Wald, Iddo Yehoshua; Degraen, Donald; Maimon, Amber; Keppel, Jonas; Schneegass, Stefan; Malaka, Rainer: Demonstrating Spatial Haptics: A Sensory Substitution Method for Distal Object Detection Using Tactile Cues. In: Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2025. doi:10.1145/3706599.3721281

We present Spatial Haptics, a sensory substitution method for representing locations of remote objects in 3D space via haptics. Spatial Haptics imitates auditory localization processes to enable vibrotactile localization abilities similar to those of some animal species. Two implementations of the localization method were developed, that modulate the vibration amplitude of the controllers relative to a target object in Virtual Reality. In Ear-Based Localization, vibrations are modulated based on the relative locations of the ears to the target, while in Hand-Based Localization, the amplitude is determined based on the relative locations of the hands to the target. In this interactive demonstration, users can experience the vibrotactile localization approaches in an interactive VR mini-game. Their task is to locate the target object in a scene consisting of multiple moving objects. By experiencing spatial localization using haptics hands-on, participants can evaluate the benefits of this sensory substitution approach for detecting distal objects.

- Wald, Iddo Yehoshua; Degraen⁎, Donald; Maimon⁎, Amber; Keppel, Jonas; Schneegass, Stefan; Malaka, Rainer: Spatial Haptics: A Sensory Substitution Method for Distal Object Detection Using Tactile Cues. In: Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2025. doi:10.1145/3706598.3714083

We present a sensory substitution-based method for representing locations of remote objects in 3D space via haptics. By imitating auditory localization processes, we enable vibrotactile localization abilities similar to those of some spiders, elephants, and other species. We evaluated this concept in virtual reality by modulating the vibration amplitude of two controllers depending on relative locations to a target. We developed two implementations applying this method using either ear or hand locations. A proof-of-concept study assessed localization performance and user experience, achieving under 30° differentiation between horizontal targets with no prior training. This unique approach enables localization by using only two actuators, requires low computational power, and could potentially assist users in gaining spatial awareness in challenging environments. We compare the implementations and discuss the use of hands as ears in motion, a novel technique not previously explored in the sensory substitution literature.

- Keppel, Jonas; Strauss, Marvin; Gruenefeld, Uwe; Schneegass, Stefan: Magic Mirror: Designing a Weight Change Visualization for Domestic Use. In: Proc. ACM Hum.-Comput. Interact., vol. 8 (2024). doi:10.1145/3698149

Virtual mirrors displaying weight changes can support users in forming healthier habits by visualizing potential future body shapes. However, these often come with privacy, feasibility, and cost limitations. This paper introduces the Magic Mirror, a novel distortion-based mirror that leverages curvature effects to alter the appearance of body size while preserving privacy. We constructed the Magic Mirror and compared it to a video-based alternative. In an online study (N=115), we determined the optimal parameters for each system, comparing weight change visualizations and manipulation levels. Afterward, we conducted a laboratory study (N=24) to compare the two systems in terms of user perception, motivational potential, and willingness to use daily. Our findings indicate that the Magic Mirror surpasses the video-based mirror in terms of suitability for residential application, as it addresses feasibility concerns commonly associated with virtual mirrors. Our work demonstrates that mirrors that display weight changes can be implemented in users’ homes without any cameras, ensuring privacy.

- Kassem, Khaled; Saad, Alia; Pascher, Max; Schett, Martin; Michahelles, Florian: Push Me: Evaluating Usability and User Experience in Nudge-based Human-Robot Interaction through Embedded Force and Torque Sensors. In: Proceedings of Mensch Und Computer 2024. Association for Computing Machinery, New York, NY, USA, 2024, pp. 399-407. doi:10.1145/3670653.3677487

Robots are expected to be integrated into human workspaces, which makes the development of effective and intuitive interaction crucial. While vision- and speech-based robot interfaces have been well studied, direct physical interaction has been less explored. However, HCI research has shown that direct manipulation interfaces provide more intuitive and satisfying user experiences, compared to other interaction modes. This work examines how built-in force/torque sensors in robots can facilitate direct manipulation through nudge-based interactions. We conducted a user study (N = 23) to compare this haptic approach with traditional touchscreen interfaces, focusing on workload, user experience, and usability. Our results show that haptic interactions are more engaging and intuitive but also more physically demanding compared to touchscreen interaction. These findings have implications for the design of physical human-robot interaction interfaces. Given the benefits of physical interaction highlighted in our study, we recommend that designers incorporate this interaction method for human-robot interaction, especially at close quarters.

- Goldau, Felix Ferdinand; Pascher, Max; Baumeister, Annalies; Tolle, Patrizia; Gerken, Jens; Frese, Udo: Adaptive Control in Assistive Application - A Study Evaluating Shared Control by Users with Limited Upper Limb Mobility. In: RO-MAN 2024: 33rd IEEE International Conference on Robot and Human Interactive Communication. IEEE, Pasadena, California, US, 2024. doi:10.48550/arXiv.2406.06103

Shared control in assistive robotics blends human autonomy with computer assistance, thus simplifying complex tasks for individuals with physical impairments. This study assesses an adaptive Degrees of Freedom control method specifically tailored for individuals with upper limb impairments. It employs a between-subjects analysis with 24 participants, conducting 81 trials across three distinct input devices in a realistic everyday-task setting. Given the diverse capabilities of the vulnerable target demographic and the known challenges in statistical comparisons due to individual differences, the study focuses primarily on subjective qualitative data. The results reveal consistently high success rates in trial completions, irrespective of the input device used. Participants appreciated their involvement in the research process, displayed a positive outlook, and quick adaptability to the control system. Notably, each participant effectively managed the given task within a short time frame.

- Pascher, Max: An Interaction Design for AI-enhanced Assistive Human-Robot Collaboration (Dissertation). 2024. doi:10.17185/duepublico/82229

The global population of individuals with motor impairments faces substantial challenges, including reduced mobility, social exclusion, and increased caregiver dependency. While advances in assistive technologies can augment human capabilities, independence, and overall well-being by alleviating caregiver fatigue and care receiver weariness, target user involvement regarding their needs and lived experiences in the ideation, development, and evaluation process is often neglected. Further, current interaction design concepts often prove unsatisfactory, posing challenges to user autonomy and system usability, hence resulting in additional stress for end users. Here, the advantages of Artificial Intelligence (AI) can enhance accessibility of assistive technology. As such, a notable research gap exists in the development and evaluation of interaction design concepts for AI-enhanced assistive robotics.

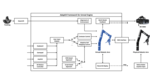

This thesis addresses the gap by streamlining the development and evaluation of shared control approaches while enhancing user integration through three key contributions. Firstly, it identifies user needs for assistive technologies and explores concepts related to robot motion intent communication. Secondly, it introduces the innovative shared control approach Adaptive DoF Mapping Control (ADMC), which generates mappings of a robot’s Degrees-of-Freedom (DoFs) based on situational Human-Robot Interaction (HRI) tasks and suggests them to users. Thirdly, it presents and evaluates the Extended Reality (XR) framework AdaptiX for in-silico development and evaluation of multi-modal interaction designs and feedback methods for shared control applications.

In contrast to existing goal-oriented shared control approaches, my work highlights the development of a novel concept that does not rely on computing trajectories for known movement goals. Instead of pre-determined goals, ADMC utilises its inherent rule engine – for example, a Convolutional Neural Network (CNN), the robot arm’s posture, and a colour-and-depth camera feed of the robot’s gripper surroundings. This approach facilitates a more flexible and situationally aware shared control system.

The evaluations within this thesis demonstrate that the ADMC approach significantly reduces task completion time, average number of necessary switches between DoF mappings, and perceived workload of users, compared to a non-adaptive input method utilising cardinal DoFs. Further, the effectiveness of AdaptiX for evaluations in-silico as well as real-world scenarios has been shown in one remote and two laboratory user studies.

The thesis emphasises the transformative impact of assistive technologies for individuals with motor impairments, stressing the importance of user-centred design and legible AI-enhanced shared control applications, as well as the benefits of in-silico testing. Further, it also outlines future research opportunities with a focus on refining communication methods, extending the application of approaches like ADMC, and enhancing tools like AdaptiX to accommodate diverse tasks and scenarios. Addressing these challenges can further advance AI-enhanced assistive robotics, promoting the full inclusion of individuals with physical impairments in social and professional spheres.

- Pascher, Max; Goldau, Felix Ferdinand; Kronhardt, Kirill; Frese, Udo; Gerken, Jens: AdaptiX – A Transitional XR Framework for Development and Evaluation of Shared Control Applications in Assistive Robotics. In: Proc. ACM Hum.-Comput. Interact., vol. 8 (2024), no. EICS, pp. 1-28. doi:10.1145/3660243

With the ongoing efforts to empower people with mobility impairments and the increase in technological acceptance by the general public, assistive technologies, such as collaborative robotic arms, are gaining popularity. Yet, their widespread success is limited by usability issues, specifically the disparity between user input and software control along the autonomy continuum. To address this, shared control concepts provide opportunities to combine the targeted increase of user autonomy with a certain level of computer assistance. This paper presents the free and open-source AdaptiX XR framework for developing and evaluating shared control applications in a high-resolution simulation environment. The initial framework consists of a simulated robotic arm with an example scenario in Virtual Reality (VR), multiple standard control interfaces, and a specialized recording/replay system. AdaptiX can easily be extended for specific research needs, allowing Human-Robot Interaction (HRI) researchers to rapidly design and test novel interaction methods, intervention strategies, and multi-modal feedback techniques, without requiring an actual physical robotic arm during the early phases of ideation, prototyping, and evaluation. Also, a Robot Operating System (ROS) integration enables the controlling of a real robotic arm in a PhysicalTwin approach without any simulation-reality gap. Here, we review the capabilities and limitations of AdaptiX in detail and present three bodies of research based on the framework. AdaptiX can be accessed at https://adaptix.robot-research.de.

- Liebers, Jonathan; Laskowski, Patrick; Rademaker, Florian; Sabel, Leon; Hoppen, Jordan; Gruenefeld, Uwe; Schneegass, Stefan: Kinetic Signatures: A Systematic Investigation of Movement-Based User Identification in Virtual Reality. In: Proceedings of the CHI Conference on Human Factors in Computing Systems (CHI ’24). Association for Computing Machinery, New York, NY, USA, 2024. doi:10.1145/3613904.3642471 (This research received the Best Paper Award.)

Behavioral Biometrics in Virtual Reality (VR) enable implicit user identification by leveraging the motion data of users’ heads and hands from their interactions in VR. This spatiotemporal data forms a Kinetic Signature, which is a user-dependent behavioral biometric trait. Although kinetic signatures have been widely used in recent research, the factors contributing to their degree of identifiability remain mostly unexplored. Drawing from existing literature, this work systematically examines the influence of static and dynamic components in human motion. We conducted a user study (N = 24) with two sessions to reidentify users across different VR sports and exercises after one week. We found that the identifiability of a kinetic signature depends on its inherent static and dynamic factors, with the best combination allowing for 90.91% identification accuracy after one week had passed. Therefore, this work lays a foundation for designing and refining movement-based identification protocols in immersive environments.

- Keppel, Jonas; Schneegass, Stefan: Buying vs. Building: Can Money Fix Everything When Prototyping in CyclingHCI for Sports? CHI 2024, Honolulu, Hawai'i, 2024.

This position paper explores the dichotomy of building versus buying in the domain of Cycling Human-Computer Interaction (CyclingHCI) for sports applications. Our research focuses on indoor cycling to enhance physical activity through sports motivation. Recognizing that the social component is crucial for many users to sustain regular sports engagement, we investigate the integration of social elements within indoor cycling topics. We outline two approaches: First, we utilize Virtual Reality (VR) Exergames using a bike-based controller as input and actuator aiming for immersive experiences and, therefore, buying a commercial smartbike, the Wahoo KickR Bike. Second, we enhance traditional spinning classes with interactive technology to foster social components during courses, building self-made sensors for attachment to mechanical indoor bikes. Finally, we discuss the challenges and benefits of constructing custom sensors versus purchasing commercial bike trainers. Our lessons learned contribute to informed decision-making in CyclingHCI prototyping, balancing innovation with practicality.

- Lakhnati, Younes; Pascher, Max; Gerken, Jens: Exploring a GPT-based large language model for variable autonomy in a VR-based human-robot teaming simulation. In: Frontiers in Robotics and AI, vol. 2024 (2024), no. 11. doi:10.3389/frobt.2024.1347538

In a rapidly evolving digital landscape autonomous tools and robots are becoming commonplace. Recognizing the significance of this development, this paper explores the integration of Large Language Models (LLMs) like Generative pre-trained transformer (GPT) into human-robot teaming environments to facilitate variable autonomy through the means of verbal human-robot communication. In this paper, we introduce a novel simulation framework for such a GPT-powered multi-robot testbed environment, based on a Unity Virtual Reality (VR) setting. This system allows users to interact with simulated robot agents through natural language, each powered by individual GPT cores. By means of OpenAI’s function calling, we bridge the gap between unstructured natural language input and structured robot actions. A user study with 12 participants explores the effectiveness of GPT-4 and, more importantly, user strategies when being given the opportunity to converse in natural language within a simulated multi-robot environment. Our findings suggest that users may have preconceived expectations on how to converse with robots and seldom try to explore the actual language and cognitive capabilities of their simulated robot collaborators. Still, those users who did explore were able to benefit from a much more natural flow of communication and human-like back-and-forth. We provide a set of lessons learned for future research and technical implementations of similar systems.

- Nanavati, Amal; Pascher, Max; Ranganeni, Vinitha; Gordon, Ethan K.; Faulkner, Taylor Kessler; Srinivasa, Siddhartha S.; Cakmak, Maya; Alves-Oliveira, Patrícia; Gerken, Jens: Multiple Ways of Working with Users to Develop Physically Assistive Robots. In: A3DE '24: Workshop on Assistive Applications, Accessibility, and Disability Ethics at the ACM/IEEE International Conference on Human-Robot Interaction. 2024. doi:10.48550/arXiv.2403.00489

Despite the growth of physically assistive robotics (PAR) research over the last decade, nearly half of PAR user studies do not involve participants with the target disabilities. There are several reasons for this (recruitment challenges, small sample sizes, and transportation logistics), all influenced by systemic barriers that people with disabilities face. However, it is well-established that working with end-users results in technology that better addresses their needs and integrates with their lived circumstances. In this paper, we reflect on multiple approaches we have taken to working with people with motor impairments across the design, development, and evaluation of three PAR projects: (a) assistive feeding with a robot arm; (b) assistive teleoperation with a mobile manipulator; and (c) shared control with a robot arm. We discuss these approaches to working with users along three dimensions (individual- vs. community-level insight, logistic burden on end-users vs. researchers, and benefit to researchers vs. community) and share recommendations for how other PAR researchers can incorporate users into their work.

- Wozniak, Maciej K.; Pascher, Max; Ikeda, Bryce; Luebbers, Matthew B.; Jena, Ayesha: Virtual, Augmented, and Mixed Reality for Human-Robot Interaction (VAM-HRI). In: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (HRI '24 Companion), March 11-14, 2024, Boulder, CO, USA. ACM, Boulder, Colorado, USA, 2024. doi:10.1145/3610978.3638158

The 7th International Workshop on Virtual, Augmented, and Mixed Reality for Human-Robot Interaction (VAM-HRI) seeks to bring together researchers from human-robot interaction (HRI), robotics, and mixed reality (MR) to address the challenges related to mixed reality interactions between humans and robots. Key topics include the development of robots capable of interacting with humans in mixed reality, the use of virtual reality for creating interactive robots, designing augmented reality interfaces for communication between humans and robots, exploring mixed reality interfaces for enhancing robot learning, comparative analysis of the capabilities and perceptions of robots and virtual agents, and sharing best design practices. VAM-HRI 2024 will build on the success of VAM-HRI workshops held from 2018 to 2023, advancing research in this specialized community. The prior year’s website is located at vam-hri.github.io.

- Pascher, Max; Saad, Alia; Liebers, Jonathan; Heger, Roman; Gerken, Jens; Schneegass, Stefan; Gruenefeld, Uwe: Hands-On Robotics: Enabling Communication Through Direct Gesture Control. In: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (HRI '24 Companion), March 11-14, 2024, Boulder, CO, USA. ACM, Boulder, Colorado, USA, 2024. doi:10.1145/3610978.3640635

Effective Human-Robot Interaction (HRI) is fundamental to seamlessly integrating robotic systems into our daily lives. However, current communication modes require additional technological interfaces, which can be cumbersome and indirect. This paper presents a novel approach, using direct motion-based communication by moving a robot's end effector. Our strategy enables users to communicate with a robot by using four distinct gestures: two handshakes ('formal' and 'informal') and two letters ('W' and 'S'). As a proof-of-concept, we conducted a user study with 16 participants, capturing subjective experience ratings and objective data for training machine learning classifiers. Our findings show that the four different gestures performed by moving the robot's end effector can be distinguished with close to 100% accuracy. Our research offers implications for the design of future HRI interfaces, suggesting that motion-based interaction can empower human operators to communicate directly with robots, removing the necessity for additional hardware.

- Pascher, Max; Zinta, Kevin; Gerken, Jens: Exploring of Discrete and Continuous Input Control for AI-enhanced Assistive Robotic Arms. In: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (HRI '24 Companion), March 11-14, 2024, Boulder, CO, USA. ACM, Boulder, Colorado, USA, 2024. doi:10.1145/3610978.3640626

Robotic arms, integral in domestic care for individuals with motor impairments, enable them to perform Activities of Daily Living (ADLs) independently, reducing dependence on human caregivers. These collaborative robots require users to manage multiple Degrees-of-Freedom (DoFs) for tasks like grasping and manipulating objects. Conventional input devices, typically limited to two DoFs, necessitate frequent and complex mode switches to control individual DoFs. Modern adaptive controls with feed-forward multi-modal feedback reduce the overall task completion time, number of mode switches, and cognitive load. Despite the variety of input devices available, their effectiveness in adaptive settings with assistive robotics has yet to be thoroughly assessed. This study explores three different input devices by integrating them into an established XR framework for assistive robotics, evaluating them and providing empirical insights through a preliminary study for future developments.

- Pascher, Max: System and Method for Providing an Object-related Haptic Effect. German Patent and Trade Mark Office (DPMA), 2024.

- Ivezić, Dijana; Keppel, Jonas; Horneber, David; Becker, Christine; Laumer, Sven; Walle, Hardy; Schneegass, Stefan; Amft, Oliver: EghiFit: Smartphone based Behaviour Monitoring and Health Recommendation in a Weight Loss Intervention Study. In: F1000Research, vol. 13 (2024), p. 1347.

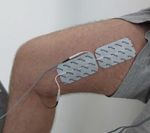

- Faltaous, Sarah; Williamson, Julie R.; Koelle, Marion; Pfeiffer, Max; Keppel, Jonas; Schneegass, Stefan: Understanding User Acceptance of Electrical Muscle Stimulation in Human-Computer Interaction. In: Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2024. doi:10.1145/3613904.3642585

Electrical Muscle Stimulation (EMS) has unique capabilities that can manipulate users’ actions or perceptions, such as actuating user movement while walking, changing the perceived texture of food, and guiding movements for a user learning an instrument. These applications highlight the potential utility of EMS, but such benefits may be lost if users reject EMS. To investigate user acceptance of EMS, we conducted an online survey (N = 101). We compared eight scenarios, six from HCI research applications and two from the sports and health domain. To gain further insights, we conducted in-depth interviews with a subset of the survey respondents (N = 10). The results point to the challenges and potential of EMS regarding social and technological acceptance, showing that there is greater acceptance of applications that manipulate action than those that manipulate perception. The interviews revealed safety concerns and user expectations for the design and functionality of future EMS applications.

- Keppel, Jonas; Ivezić, Dijana; Gruenefeld, Uwe; Lukowicz, Paul; Amft, Oliver; Schneegass, Stefan: AI and Health: Using Digital Twins to Foster Healthy Behavior. In: Mensch und Computer 2024-Workshopband. 2024, pp. 10-18420. doi:10.18420/muc2024-mci-ws05-114

- Ivezić, Dijana; Keppel, Jonas; Schneegass, Stefan; Amft, Oliver: Patient Adherence and Challenges in a Weight Loss Study: Smartphone Data Stream and Gamification. Gesellschaft für Informatik e.V., 2024. doi:10.18420/muc2024-mci-ws05-222

This paper presents findings from our implementation of a context aware health guidance system for obese individuals, with a focus on smartphone- and smartwatch-based health monitoring and participant adherence. To aid participants in weight loss, our system utilizes data from wearables and smartphones, integrating nutrition tracking and gamification elements into a smartphone application and a web-based health dashboard for health coaches. Eight participants completed a 120-day field study to evaluate the system and examine user adherence to health monitoring and the effectiveness of gamification in a weight loss program. Data on steps, sleep, heart rate, weather, and manually logged meals were collected. Adherence varied across data types, with step counts being the most consistently collected, while sleep and heart rate data were limited due to inconsistent smartwatch usage.

- Liebers, Carina; Megarajan, Pranav; Auda, Jonas; Stratmann, Tim C; Pfingsthorn, Max; Gruenefeld, Uwe; Schneegass, Stefan: Keep the Human in the Loop: Arguments for Human Assistance in the Synthesis of Simulation Data for Robot Training. In: Multimodal Technologies and Interaction, vol. 8 (2024), p. 18. doi:10.3390/mti8030018

Robot training often takes place in simulated environments, particularly with reinforcement learning. Therefore, multiple training environments are generated using domain randomization to ensure transferability to real-world applications and compensate for unknown real-world states. We propose improving domain randomization by involving human application experts in various stages of the training process. Experts can provide valuable judgments on simulation realism, identify missing properties, and verify robot execution. Our human-in-the-loop workflow describes how they can enhance the process in five stages: validating and improving real-world scans, correcting virtual representations, specifying application-specific object properties, verifying and influencing simulation environment generation, and verifying robot training. We outline examples and highlight research opportunities. Furthermore, we present a case study in which we implemented different prototypes, demonstrating the potential of human experts in the given stages. Our early insights indicate that human input can benefit robot training at different stages.

- Liebers, Carina; Pfützenreuter, Niklas; Prochazka, Marvin; Megarajan, Pranav; Furuno, Eike; Löber, Jan; Stratmann, Tim C.; Auda, Jonas; Degraen, Donald; Gruenefeld, Uwe; Schneegass, Stefan: Look Over Here! Comparing Interaction Methods for User-Assisted Remote Scene Reconstruction. In: Extended Abstracts of the 2024 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2024. doi:10.1145/3613905.3650982

Detailed digital representations of physical scenes are key in many cases, such as historical site preservation or hazardous area inspection. To automate the capturing process, robots or drones mounted with sensors can algorithmically record the environment from different viewpoints. However, environmental complexities often lead to incomplete captures. We believe humans can support scene capture as their contextual understanding enables easy identification of missing areas and recording errors. Therefore, they need to perceive the recordings and suggest new sensor poses. In this work, we compare two human-centric approaches in Virtual Reality for scene reconstruction through the teleoperation of a remote robot arm, i.e., directly providing sensor poses (direct method) or specifying missing areas in the scans (indirect method). Our results show that directly providing sensor poses leads to higher efficiency and user experience. In future work, we aim to compare the quality of human assistance to automatic approaches.

- Liebers, Carina; Pfützenreuter, Niklas; Auda, Jonas; Gruenefeld, Uwe; Schneegass, Stefan: "Computer, Generate!" – Investigating User Controlled Generation of Immersive Virtual Environments. In: HHAI 2024: Hybrid Human AI Systems for the Social Good. IOS Press, Malmö, 2024, pp. 213-227. doi:10.3233/FAIA240196

For immersive experiences such as virtual reality, explorable worlds are often fundamental. Generative artificial intelligence looks promising to accelerate the creation of such environments. However, it remains unclear how existing interaction modalities can support user-centered world generation and how users remain in control of the process. Thus, in this paper, we present a virtual reality application to generate virtual environments and compare three common interaction modalities (voice, controller, and hands) in a pre-study (N = 18), revealing a combination of initial voice input and continued controller manipulation as the most suitable. We then investigate three levels of process control (all-at-once, creation-before-manipulation, and step-by-step) in a user study (N = 27). Our results show that although all-at-once reduced the number of object manipulations, participants felt more in control when using the step-by-step approach.

- Saad, Alia; Pascher, Max; Kassem, Khaled; Heger, Roman; Liebers, Jonathan; Schneegass, Stefan; Gruenefeld, Uwe: Hand-in-Hand: Investigating Mechanical Tracking for User Identification in Cobot Interaction. In: Proceedings of International Conference on Mobile and Ubiquitous Multimedia (MUM). Vienna, Austria, 2023. doi:10.1145/3626705.3627771

Robots play a vital role in modern automation, with applications in manufacturing and healthcare. Collaborative robots integrate human and robot movements. Therefore, it is essential to ensure that interactions involve qualified, and thus identified, individuals. This study delves into a new approach: identifying individuals through robot arm movements. Unlike in previous methods, users guide the robot, and the robot senses the movements via joint sensors. We asked 18 participants to perform six gestures, revealing the potential of these movements as unique behavioral traits or biometrics and achieving an F1-score of up to 0.87, which suggests direct robot interactions as a promising avenue for implicit and explicit user identification.

- Liebers, Jonathan; Burschik, Christian; Gruenefeld, Uwe; Schneegass, Stefan: Exploring the Stability of Behavioral Biometrics in Virtual Reality in a Remote Field Study: Towards Implicit and Continuous User Identification through Body Movements. In: Proceedings of the 29th ACM Symposium on Virtual Reality Software and Technology. Association for Computing Machinery, New York, NY, USA, 2023. doi:10.1145/3611659.3615696

Behavioral biometrics has recently become a viable alternative method for user identification in Virtual Reality (VR). Its ability to identify users based solely on their implicit interaction allows for high usability and removes the burden commonly associated with security mechanisms. However, little is known about the temporal stability of behavior (i.e., how behavior changes over time), as most previous works were evaluated in highly controlled lab environments over short periods. In this work, we present findings obtained from a remote field study (N = 15) that elicited data over a period of eight weeks from a popular VR game. We found that there are changes in people’s behavior over time, but that two-session identification still is possible with a mean F1-score of up to 71%, while an initial training yields 86%. However, we also see that performance can drop by up to over 50 percentage points when testing with later sessions, compared to the first session, particularly for smaller groups. Thus, our findings indicate that the use of behavioral biometrics in VR is convenient for the user and practical with regard to changing behavior and also reliable regarding behavioral variation.

- Auda, Jonas; Grünefeld, Uwe; Faltaous, Sarah; Mayer, Sven; Schneegass, Stefan: A Scoping Survey on Cross-reality Systems. In: ACM Computing Surveys. 2023. doi:10.1145/3616536

Immersive technologies such as Virtual Reality (VR) and Augmented Reality (AR) empower users to experience digital realities. Known as distinct technology classes, the lines between them are becoming increasingly blurry with recent technological advancements. New systems enable users to interact across technology classes or transition between them—referred to as cross-reality systems. Nevertheless, these systems are not well understood. Hence, in this article, we conducted a scoping literature review to classify and analyze cross-reality systems proposed in previous work. First, we define these systems by distinguishing three different types. Thereafter, we compile a literature corpus of 306 relevant publications, analyze the proposed systems, and present a comprehensive classification, including research topics, involved environments, and transition types. Based on the gathered literature, we extract nine guiding principles that can inform the development of cross-reality systems. We conclude with research challenges and opportunities.

- Auda, Jonas; Grünefeld, Uwe; Mayer, Sven; Faltaous, Sarah; Schneegass, Stefan: The Actuality-Time Continuum: Visualizing Interactions and Transitions Taking Place in Cross-Reality Systems. In: IEEE ISMAR 2023. Sydney, 2023.

In the last decade, researchers contributed an increasing number of cross-reality systems and their evaluations. Going beyond individual technologies such as Virtual or Augmented Reality, these systems introduce novel approaches that help to solve relevant problems such as the integration of bystanders or physical objects. However, cross-reality systems are complex by nature, and describing the interactions and transitions taking place is a challenging task. Thus, in this paper, we propose the idea of the Actuality-Time Continuum that aims to enable researchers and designers alike to visualize complex cross-reality experiences. Moreover, we present four visualization examples that illustrate the potential of our proposal and conclude with an outlook on future perspectives.

- Keppel, Jonas; Strauss, Marvin; Faltaous, Sarah; Liebers, Jonathan; Heger, Roman; Gruenefeld, Uwe; Schneegass, Stefan: Don't Forget to Disinfect: Understanding Technology-Supported Hand Disinfection Stations. In: Proc. ACM Hum.-Comput. Interact., vol. 7 (2023). doi:10.1145/3604251

The global COVID-19 pandemic created a constant need for hand disinfection. While it is still essential, disinfection use is declining with the decrease in perceived personal risk (e.g., as a result of vaccination). Thus, this work explores using different visual cues to act as reminders for hand disinfection. We investigated different public display designs using (1) paper-based only, adding (2) screen-based, or (3) projection-based visual cues. To gain insights into these designs, we conducted semi-structured interviews with passersby (N = 30). Our results show that the screen- and projection-based conditions were perceived as more engaging. Furthermore, we conclude that the disinfection process consists of four steps that can be supported: drawing attention to the disinfection station, supporting the (subconscious) understanding of the interaction, motivating hand disinfection, and performing the action itself. We conclude with design implications for technology-supported disinfection.

- Pascher, Max; Kronhardt, Kirill; Goldau, Felix Ferdinand; Frese, Udo; Gerken, Jens: In Time and Space: Towards Usable Adaptive Control for Assistive Robotic Arms. In: RO-MAN 2023 - IEEE International Conference on Robot and Human Interactive Communication. IEEE, Busan, Korea, 2023, pp. 2300-2307. doi:10.1109/RO-MAN57019.2023.10309381

Robotic solutions, in particular robotic arms, are becoming more frequently deployed for close collaboration with humans, for example in manufacturing or domestic care environments. These robotic arms require the user to control several Degrees-of-Freedom (DoFs) to perform tasks, primarily involving grasping and manipulating objects. Standard input devices predominantly have two DoFs, requiring time-consuming and cognitively demanding mode switches to select individual DoFs. Contemporary Adaptive DoF Mapping Controls (ADMCs) have been shown to decrease the necessary number of mode switches but have so far been unable to significantly reduce the perceived workload. Users still bear the mental workload of incorporating abstract mode switching into their workflow. We address this by providing feedforward multimodal feedback using updated recommendations of ADMC, allowing users to visually compare the current and the suggested mapping in real time. We contrast the effectiveness of two new approaches that a) continuously recommend updated DoF combinations or b) use discrete thresholds between current robot movements and new recommendations. Both are compared in a Virtual Reality (VR) in-person study against a classic control method. Significant results for lowered task completion time, fewer mode switches, and reduced perceived workload conclusively establish that in combination with feedforward, ADMC methods can indeed outperform classic mode switching. A lack of apparent quantitative differences between Continuous and Threshold reveals the importance of user-centered customization options. Including these implications in the development process will improve usability, which is essential for successfully implementing robotic technologies with high user acceptance.

- Liebers, Carina; Prochazka, Marvin; Pfützenreuter, Niklas; Liebers, Jonathan; Auda, Jonas; Gruenefeld, Uwe; Schneegass, Stefan: Pointing It out! Comparing Manual Segmentation of 3D Point Clouds between Desktop, Tablet, and Virtual Reality. In: International Journal of Human–Computer Interaction (2023), pp. 1-15. doi:10.1080/10447318.2023.2238945

Scanning everyday objects with depth sensors is the state-of-the-art approach to generating point clouds for realistic 3D representations. However, the resulting point cloud data suffers from outliers and contains irrelevant data from neighboring objects. To obtain only the desired 3D representation, additional manual segmentation steps are required. In this paper, we compare three different technology classes as independent variables (desktop vs. tablet vs. virtual reality) in a within-subject user study (N = 18) to understand their effectiveness and efficiency for such segmentation tasks. We found that desktop and tablet still outperform virtual reality regarding task completion times, while we could not find a significant difference between them in the effectiveness of the segmentation. In the post hoc interviews, participants preferred the desktop due to its familiarity and temporal efficiency and virtual reality due to its given three-dimensional representation.

- Abdrabou, Yasmeen; Rivu, Sheikh Radiah; Ammar, Tarek; Liebers, Jonathan; Saad, Alia; Liebers, Carina; Gruenefeld, Uwe; Knierim, Pascal; Khamis, Mohamed; Makela, Ville; Schneegass, Stefan: Understanding Shoulder Surfer Behavior and Attack Patterns Using Virtual Reality. In: Proceedings of the 2022 International Conference on Advanced Visual Interfaces, vol. 2022 (2023), pp. 1-9. doi:10.1145/3531073.3531106

- Liebers, Carina; Agarwal, Shivam; Beck, Fabian: CohExplore: Visually Supporting Students in Exploring Text Cohesion. In: Gillmann, Christina; Krone, Michael; Lenti, Simone (eds.): EuroVis 2023 - Posters. The Eurographics Association, 2023. doi:10.2312/evp.20231058

A cohesive text allows readers to follow the described ideas and events. Exploring cohesion in text might aid students in enhancing their academic writing. We introduce CohExplore, which promotes exploring and reflecting on the cohesion of a given text by visualizing computed cohesion-related metrics on an overview and a detailed level. Detected topics are color-coded, semantic similarity is shown via lines, while connectives and co-references in a paragraph are encoded using text decoration. Demonstrating the system, we share insights about a student-authored text.

- Liebers, Carina; Agarwal, Shivam; Krug, Maximilian; Pitsch, Karola; Beck, Fabian: VisCoMET: Visually Analyzing Team Collaboration in Medical Emergency Trainings. In: Computer Graphics Forum (2023). doi:10.1111/cgf.14819

Handling emergencies requires efficient and effective collaboration of medical professionals. To analyze their performance, in an application study, we have developed VisCoMET, a visual analytics approach displaying interactions of healthcare personnel in a triage training of a mass casualty incident. The application scenario stems from social interaction research, where the collaboration of teams is studied from different perspectives. We integrate recorded annotations from multiple sources, such as recorded videos of the sessions, transcribed communication, and eye-tracking information. For each session, an information-rich timeline visualizes events across these different channels, specifically highlighting interactions between the team members. We provide algorithmic support to identify frequent event patterns and to search for user-defined event sequences. Comparing different teams, an overview visualization aggregates each training session in a visual glyph as a node, connected to similar sessions through edges. An application example shows the usage of the approach in the comparative analysis of triage training sessions, where multiple teams encountered the same scene, and highlights discovered insights. The approach was evaluated through feedback from visualization and social interaction experts. The results show that the approach supports reflecting on teams’ performance by exploratory analysis of collaboration behavior while particularly enabling the comparison of triage training sessions.

- Pascher, Max; Grünefeld, Uwe; Schneegass, Stefan; Gerken, Jens: How to Communicate Robot Motion Intent: A Scoping Review. In: ACM (ed.): Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (CHI ’23). 2023. doi:10.1145/3544548.3580857

Robots are becoming increasingly omnipresent in our daily lives, supporting us and carrying out autonomous tasks. In Human-Robot Interaction, human actors benefit from understanding the robot's motion intent to avoid task failures and foster collaboration. Finding effective ways to communicate this intent to users has recently received increased research interest. However, no common language has been established to systematize robot motion intent. This work presents a scoping review aimed at unifying existing knowledge. Based on our analysis, we present an intent communication model that depicts the relationship between robot and human through different intent dimensions (intent type, intent information, intent location). We discuss these different intent dimensions and their interrelationships with different kinds of robots and human roles. Throughout our analysis, we classify the existing research literature along our intent communication model, allowing us to identify key patterns and possible directions for future research.

- Pascher, Max; Franzen, Til; Kronhardt, Kirill; Grünefeld, Uwe; Schneegass, Stefan; Gerken, Jens: HaptiX: Vibrotactile Haptic Feedback for Communication of 3D Directional Cues. In: ACM (ed.): Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems - Extended Abstract (CHI ’23). 2023. doi:10.1145/3544549.3585601

In Human-Computer-Interaction, vibrotactile haptic feedback offers the advantage of being independent of any visual perception of the environment. Most importantly, the user's field of view is not obscured by user interface elements, and the visual sense is not unnecessarily strained. This is especially advantageous when the visual channel is already busy, or the visual sense is limited. We developed three design variants based on different vibrotactile illusions to communicate 3D directional cues. In particular, we explored two variants based on the vibrotactile illusion of the cutaneous rabbit and one based on apparent vibrotactile motion. To communicate gradient information, we combined these with pulse-based and intensity-based mapping. A subsequent study showed that the pulse-based variants based on the vibrotactile illusion of the cutaneous rabbit are suitable for communicating both directional and gradient characteristics. The results further show that a representation of 3D directions via vibrations can be effective and beneficial.

- Tarner, Hagen; Beck, Fabian: Visualizing Runtime Evolution Paths in a Multidimensional Space (Work In Progress Paper). In: Companion of the 2023 ACM/SPEC International Conference on Performance Engineering. ACM, Coimbra, Portugal, 2023. doi:10.1145/3578245.3585031

Runtime data of software systems is often of multivariate nature, describing different aspects of performance among other characteristics, and evolves along different versions or changes depending on the execution context. This poses a challenge for visualizations, which are typically only two- or three-dimensional. Using dimensionality reduction, we project the multivariate runtime data to 2D and visualize the result in a scatter plot. To show changes over time, we apply the projection to multiple timestamps and connect temporally adjacent points to form trajectories. This allows for cluster and outlier detection, analysis of co-evolution, and finding temporal patterns. While projected temporal trajectories have been applied to other domains before, we use it to visualize software evolution and execution context changes as evolution paths. We experiment with and report results of two application examples: (I) the runtime evolution along different versions of components from the Apache Commons project, and (II) a benchmark suite from scientific visualization comparing different rendering techniques along camera paths.

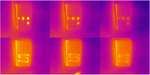

- Saad, Alia; Izadi, Kian; Ahmad Khan, Anam; Knierim, Pascal; Schneegass, Stefan; Alt, Florian; Abdelrahman, Yomna: HotFoot: Foot-Based User Identification Using Thermal Imaging. In: Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA, 2023. doi:10.1145/3544548.3580924

We propose a novel method for seamlessly identifying users by combining thermal and visible feet features. While it is known that users’ feet have unique characteristics, these have so far been underutilized for biometric identification, as observing those features often requires the removal of shoes and socks. As thermal cameras are becoming ubiquitous, we foresee a new form of identification, using feet features and heat traces to reconstruct the footprint even while wearing shoes or socks. We collected a dataset of users’ feet (N = 21), wearing three types of footwear (personal shoes, standard shoes, and socks) on three floor types (carpet, laminate, and linoleum). By combining visual and thermal features, an AUC between 91.1% and 98.9% can be achieved, depending on floor type and shoe type, with personal shoes on linoleum floor performing best. Our findings demonstrate the potential of thermal imaging for continuous and unobtrusive user identification.

- Escobar, Ronald; Sandoval Alcocer, Juan Pablo; Tarner, Hagen; Beck, Fabian; Bergel, Alexandre: Spike – A code editor plugin highlighting fine-grained changes. In: Working Conference on Software Visualization (VISSOFT). Limassol, Cyprus, 2022. doi:10.1109/VISSOFT55257.2022.00026

Information about source code changes is important for many software development activities. As such, modern IDEs, including IntelliJ IDEA and Visual Studio Code, show visual clues within the code editor that highlight lines that have been changed since the last synchronization with the code repository. However, the granularity of the change information is limited to a line level, showing mainly a small colored icon on the left side of the lines that have been added, deleted, or modified.

This paper introduces Spike, a source code highlighting plugin that uses the font color to visually encode fine-grained version difference information within the code editor. In contrast to the previously mentioned tools, Spike can highlight insertions, deletions, updates, and refactorings all in the same line. Our plugin also enriches the source code with small icons that allow retrieving detailed information about a given code change. We performed an exploratory user study with five professional software engineers. Our results show that our approach is able to assist practitioners with complex comprehension tasks about software history within the code editor.

- Tarner, Hagen; Bruder, Valentin; Frey, Steffen; Ertl, Thomas; Beck, Fabian: Visually Comparing Rendering Performance from Multiple Perspectives. In: Bender, Jan; Botsch, Mario; Keim, Daniel A. (eds.): Vision, Modeling, and Visualization. The Eurographics Association, Konstanz, 2022. doi:10.2312/vmv.20221211

Evaluation of rendering performance is crucial when selecting or developing algorithms, but challenging as performance can largely differ across a set of selected scenarios. Despite this, performance metrics are often reported and compared in a highly aggregated way. In this paper we suggest a more fine-grained approach for the evaluation of rendering performance, taking into account multiple perspectives on the scenario: camera position and orientation along different paths, rendering algorithms, image resolution, and hardware. The approach comprises a visual analysis system that shows and contrasts the data from these perspectives. The users can explore combinations of perspectives and gain insight into the performance characteristics of several rendering algorithms. A stylized representation of the camera path provides a base layout for arranging the multivariate performance data as radar charts, each comparing the same set of rendering algorithms while linking the performance data with the rendered images. To showcase our approach, we analyze two types of scientific visualization benchmarks.

- Keppel, Jonas; Gruenefeld, Uwe; Strauss, Marvin; Gonzalez, Luis Ignacio Lopera; Amft, Oliver; Schneegass, Stefan: Reflecting on Approaches to Monitor User's Dietary Intake. MobileHCI 2022, Vancouver, Canada, 2022.

Monitoring dietary intake is essential to providing user feedback and achieving a healthier lifestyle. In the past, different approaches for monitoring dietary behavior have been proposed. In this position paper, we first present an overview of the state-of-the-art techniques grouped by image- and sensor-based approaches. After that, we introduce a case study in which we present a Wizard-of-Oz approach as an alternative and non-automatic monitoring method.

- Keppel, Jonas; Öztürk, Alper; Herbst, Jean-Luc; Lewin, Stefan: Artificial Conscience - Fight the Inner Couch Potato. MobileHCI 2022, Vancouver, Canada, 2022.

The Artificial Conscience concept aims to improve the user’s quality of life by giving recommendations for a healthier lifestyle and reacting to possibly harmful situations detected by the various sensors of the Huawei Eyewear. However, autonomous reactions to situations that pose an immediate danger to the user’s health, as well as methods for habit-forming and other supporting functions, represent only a subset of the possible design space. All functions of this concept are described and evaluated individually, both under the assumption of autonomous operation of the Huawei Eyewear and with the inclusion of other data sources and sensors (smartphone, smartwatch). In addition, an outlook is given on additional features for the Huawei Eyewear that could be implemented in future versions of the glasses.

- Detjen, Henrik; Faltaous, Sarah; Keppel, Jonas; Prochazka, Marvin; Gruenefeld, Uwe; Sadeghian, Shadan; Schneegass, Stefan: Investigating the Influence of Gaze- and Context-Adaptive Head-up Displays on Take-Over Requests. In: ACM (ed.): AutomotiveUI '22: Proceedings of the 14th International Conference on Automotive User Interfaces and Interactive Vehicular Applications. 2022. doi:10.1145/3543174.3546089

In Level 3 automated vehicles, preparing drivers for take-over requests (TORs) on the head-up display (HUD) requires their repeated attention. Visually salient HUD elements can distract attention from potentially critical parts in a driving scene during a TOR. Further, attention is (a) meanwhile needed for non-driving-related activities and can (b) be over-requested. In this paper, we conduct a driving simulator study (N=12), varying required attention by HUD warning presence (absent vs. constant vs. TOR-only) across gaze-adaptivity (with vs. without) to fit warnings to the situation. We found that (1) drivers value visual support during TORs, (2) gaze-adaptive scene complexity reduction works but creates a benefit-neutralizing distraction for some, and (3) drivers perceive constant HUD warnings as annoying and distracting over time. Our findings highlight the need for (a) HUD adaptation based on user activities and potential TORs and (b) sparse use of warning cues in future HUD designs.

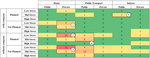

- Schneegass, Stefan; Saad, Alia; Heger, Roman; Delgado Rodriguez, Sarah; Poguntke, Romina; Alt, Florian: An Investigation of Shoulder Surfing Attacks on Touch-Based Unlock Events. In: Proc. ACM Hum.-Comput. Interact., vol. 6 (2022). doi:10.1145/3546742

This paper contributes to our understanding of user-centered attacks on smartphones. In particular, we investigate the likelihood of so-called shoulder surfing attacks during touch-based unlock events and provide insights into users' views and perceptions. To do so, we ran a two-week in-the-wild study (N=12) in which we recorded images with a 180-degree field of view lens that was mounted on the smartphone's front-facing camera. In addition, we collected contextual information and allowed participants to assess the situation. We found that only a small fraction of shoulder surfing incidents that occur during authentication are actually perceived as threatening. Furthermore, our findings suggest that our notions of (un)safe places need to be rethought. Our work is complemented by a discussion of implications for future user-centered attack-aware systems. This work can serve as a basis for usable security researchers to better design systems against user-centered attacks.

- Faltaous, Sarah; Prochazka, Marvin; Auda, Jonas; Keppel, Jonas; Wittig, Nick; Gruenefeld, Uwe; Schneegass, Stefan: Give Weight to VR: Manipulating Users’ Perception of Weight in Virtual Reality with Electric Muscle Stimulation. Association for Computing Machinery, New York, NY, USA, 2022. doi:10.1145/3543758.3547571

Virtual Reality (VR) devices empower users to experience virtual worlds through rich visual and auditory sensations. However, believable haptic feedback that communicates the physical properties of virtual objects, such as their weight, is still unsolved in VR. The current trend towards hand tracking-based interactions, neglecting the typical controllers, further amplifies this problem. Hence, in this work, we investigate the combination of passive haptics and electric muscle stimulation to manipulate users’ perception of weight, and thus, simulate objects with different weights. In a laboratory user study, we investigate four differing electrode placements, stimulating different muscles, to determine which muscle results in the most potent perception of weight with the highest comfort. We found that actuating the biceps brachii or the triceps brachii muscles increased the weight perception of the users. Our findings lay the foundation for future investigations on weight perception in VR.

- Grünefeld, Uwe; Geilen, Alexander; Liebers, Jonathan; Wittig, Nick; Koelle, Marion; Schneegass, Stefan: ARm Haptics: 3D-Printed Wearable Haptics for Mobile Augmented Reality. In: Proc. ACM Hum.-Comput. Interact., vol. 6 (2022). doi:10.1145/3546728

Augmented Reality (AR) technology enables users to superpose virtual content onto their environments. However, interacting with virtual content while mobile often requires users to perform interactions in mid-air, resulting in a lack of haptic feedback. Hence, in this work, we present the ARm Haptics system, which is worn on the user's forearm and provides 3D-printed input modules, each representing well-known interaction components such as buttons, sliders, and rotary knobs. These modules can be changed quickly, thus allowing users to adapt them to their current use case. After an iterative development of our system, which involved a focus group with HCI researchers, we conducted a user study to compare the ARm Haptics system to hand-tracking-based interaction in mid-air (baseline). Our findings show that using our system results in significantly lower error rates for slider and rotary input. Moreover, use of the ARm Haptics system results in significantly higher pragmatic quality and lower effort, frustration, and physical demand. Following our findings, we discuss opportunities for haptics worn on the forearm.

- Pascher, Max; Kronhardt, Kirill; Franzen, Til; Gerken, Jens: Adaptive DoF: Concepts to Visualize AI-generated Movements in Human-Robot Collaboration. In: Proceedings of the 2022 International Conference on Advanced Visual Interfaces (AVI 2022). ACM, New York, NY, USA, 2022. doi:10.1145/3531073.3534479

Nowadays, robots collaborate closely with humans in a growing number of areas. Enabled by lightweight materials and safety sensors, these cobots are gaining increasing popularity in domestic care, supporting people with physical impairments in their everyday lives. However, when cobots perform actions autonomously, it remains challenging for human collaborators to understand and predict their behavior. This, however, is crucial for achieving trust and user acceptance. One significant aspect of predicting cobot behavior is understanding their motion intent and comprehending how they "think" about their actions. We work on solutions that communicate the cobot's AI-generated motion intent to a human collaborator. Effective communication enables users to proceed with the most suitable option. We present a design exploration with different visualization techniques to optimize this user understanding, ideally resulting in increased safety and end-user acceptance.

- Grünefeld, Uwe; Auda, Jonas; Mathis, Florian; Schneegass, Stefan; Khamis, Mohamed; Gugenheimer, Jan; Mayer, Sven: VRception: Rapid Prototyping of Cross-Reality Systems in Virtual Reality. In: Proceedings of the 41st ACM Conference on Human Factors in Computing Systems (CHI). Association for Computing Machinery, New Orleans, United States, 2022. doi:10.1145/3491102.3501821

Cross-reality systems empower users to transition along the reality-virtuality continuum or collaborate with others experiencing different manifestations of it. However, prototyping these systems is challenging, as it requires sophisticated technical skills, time, and often expensive hardware. We present VRception, a concept and toolkit for quick and easy prototyping of cross-reality systems. By simulating all levels of the reality-virtuality continuum entirely in Virtual Reality, our concept overcomes the asynchronicity of realities, eliminating technical obstacles. Our VRception Toolkit leverages this concept to allow rapid prototyping of cross-reality systems and easy remixing of elements from all continuum levels. We replicated six cross-reality papers using our toolkit and presented them to their authors. Interviews with them revealed that our toolkit sufficiently replicates their core functionalities and allows quick iterations. Additionally, remote participants used our toolkit in pairs to collaboratively implement prototypes in about eight minutes that they would have otherwise expected to take days.

- Auda, Jonas; Grünefeld, Uwe; Schneegass, Stefan: If The Map Fits! Exploring Minimaps as Distractors from Non-Euclidean Spaces in Virtual Reality. In: CHI 22. ACM, 2022. doi:10.1145/3491101.3519621

- Abdrabou, Yasmeen; Rivu, Radiah; Ammar, Tarek; Liebers, Jonathan; Saad, Alia; Liebers, Carina; Gruenefeld, Uwe; Knierim, Pascal; Khamis, Mohamed; Mäkelä, Ville; Schneegass, Stefan; Alt, Florian: Understanding Shoulder Surfer Behavior Using Virtual Reality. In: Proceedings of the IEEE conference on Virtual Reality and 3D User Interfaces (IEEE VR). IEEE, Christchurch, New Zealand, 2022.

We explore how attackers behave during shoulder surfing. Unfortunately, such behavior is challenging to study as it is often opportunistic and can occur wherever potential attackers can observe other people’s private screens. Therefore, we investigate shoulder surfing using virtual reality (VR). We recruited 24 participants and observed their behavior in two virtual waiting scenarios: at a bus stop and in an open office space. In both scenarios, avatars interacted with private screens displaying different content, thus providing opportunities for shoulder surfing. From the results, we derive an understanding of factors influencing shoulder surfing behavior.

- Auda, Jonas; Grünefeld, Uwe; Kosch, Thomas; Schneegass, Stefan: The Butterfly Effect: Novel Opportunities for Steady-State Visually-Evoked Potential Stimuli in Virtual Reality. In: Researchgate (ed.): Augmented Humans. Kashiwa, Chiba, Japan, 2022. doi:10.1145/3519391.3519397

- Pascher, Max; Kronhardt, Kirill; Franzen, Til; Gruenefeld, Uwe; Schneegass, Stefan; Gerken, Jens: My Caregiver the Cobot: Comparing Visualization Techniques to Effectively Communicate Cobot Perception to People with Physical Impairments. In: MDPI Sensors, vol. 22 (2022). doi:10.3390/s22030755

Nowadays, robots are found in a growing number of areas where they collaborate closely with humans. Enabled by lightweight materials and safety sensors, these cobots are gaining increasing popularity in domestic care, where they support people with physical impairments in their everyday lives. However, when cobots perform actions autonomously, it remains challenging for human collaborators to understand and predict their behavior, which is crucial for achieving trust and user acceptance. One significant aspect of predicting cobot behavior is understanding their perception and comprehending how they "see" the world. To tackle this challenge, we compared three different visualization techniques for Spatial Augmented Reality. All of these communicate cobot perception by visually indicating which objects in the cobot's surrounding have been identified by their sensors. We compared the well-established visualizations Wedge and Halo against our proposed visualization Line in a remote user experiment with participants suffering from physical impairments. In a second remote experiment, we validated these findings with a broader non-specific user base. Our findings show that Line, a lower complexity visualization, results in significantly faster reaction times compared to Halo, and lower task load compared to both Wedge and Halo. Overall, users prefer Line as a more straightforward visualization. In Spatial Augmented Reality, with its known disadvantage of limited projection area size, established off-screen visualizations are not effective in communicating cobot perception and Line presents an easy-to-understand alternative.

- Kronhardt, Kirill; Rübner, Stephan; Pascher, Max; Goldau, Felix Ferdinand; Frese, Udo; Gerken, Jens: Adapt or Perish? Exploring the Effectiveness of Adaptive DoF Control Interaction Methods for Assistive Robot Arms. In: Technologies, vol. 10 (2022). doi:10.3390/technologies10010030

Robot arms are one of many assistive technologies used by people with motor impairments. Assistive robot arms can allow people to perform activities of daily living (ADL) involving grasping and manipulating objects in their environment without the assistance of caregivers. Suitable input devices (e.g., joysticks) mostly have two Degrees of Freedom (DoF), while most assistive robot arms have six or more. This results in time-consuming and cognitively demanding mode switches to change the mapping of DoFs to control the robot. One option to decrease the difficulty of controlling a high-DoF assistive robot arm using a low-DoF input device is to assign different combinations of movement-DoFs to the device's input DoFs depending on the current situation (adaptive control). To explore this method of control, we designed two adaptive control methods for a realistic virtual 3D environment. We evaluated our methods against a commonly used non-adaptive control method that requires the user to switch controls manually. This was conducted in a simulated remote study that used Virtual Reality and involved 39 non-disabled participants. Our results show that the number of mode switches necessary to complete a simple pick-and-place task decreases significantly when using an adaptive control type. In contrast, the task completion time and workload stay the same. A thematic analysis of qualitative feedback of our participants suggests that a longer period of training could further improve the performance of adaptive control methods.

- Liebers, Jonathan; Brockel, Sascha; Gruenefeld, Uwe; Schneegass, Stefan: Identifying Users by Their Hand Tracking Data in Augmented and Virtual Reality. In: International Journal of Human–Computer Interaction (2022). doi:10.1080/10447318.2022.2120845

Nowadays, Augmented and Virtual Reality devices are widely available and are often shared among users due to their high cost. Thus, distinguishing users to offer personalized experiences is essential. However, currently used explicit user authentication (e.g., entering a password) is tedious and vulnerable to attack. Therefore, this work investigates the feasibility of implicitly identifying users by their hand tracking data. In particular, we identify users by their uni- and bimanual finger behavior gathered from their interaction with eight different universal interface elements, such as buttons and sliders. In two sessions, we recorded the tracking data of 16 participants while they interacted with various interface elements in Augmented and Virtual Reality. We found that user identification is possible with up to 95% accuracy across sessions using an explainable machine learning approach. We conclude our work by discussing differences between interface elements and feature importance to provide implications for behavioral biometric systems.

- Keppel, Jonas; Liebers, Jonathan; Auda, Jonas; Gruenefeld, Uwe; Schneegass, Stefan: ExplAInable Pixels: Investigating One-Pixel Attacks on Deep Learning Models with Explainable Visualizations. In: Proceedings of the 21st International Conference on Mobile and Ubiquitous Multimedia. Association for Computing Machinery, New York, NY, USA, 2022, pp. 231-242. doi:10.1145/3568444.3568469

Nowadays, deep learning models enable numerous safety-critical applications, such as biometric authentication, medical diagnosis support, and self-driving cars. However, previous studies have frequently demonstrated that these models are attackable through slight modifications of their inputs, so-called adversarial attacks. Hence, researchers proposed investigating examples of these attacks with explainable artificial intelligence to understand them better. In this line, we developed an expert tool to explore adversarial attacks and defenses against them. To demonstrate the capabilities of our visualization tool, we worked with the publicly available CIFAR-10 dataset and generated one-pixel attacks. After that, we conducted an online evaluation with 16 experts. We found that our tool is usable and practical, providing evidence that it can support understanding, explaining, and preventing adversarial examples.

- Liebers, Jonathan; Horn, Patrick; Burschik, Christian; Gruenefeld, Uwe; Schneegass, Stefan: Using Gaze Behavior and Head Orientation for Implicit Identification in Virtual Reality. In: Proceedings of the 27th ACM Symposium on Virtual Reality Software and Technology (VRST). Association for Computing Machinery, Osaka, Japan, 2021. doi:10.1145/3489849.3489880

Identifying users of a Virtual Reality (VR) headset provides designers of VR content with the opportunity to adapt the user interface, set user-specific preferences, or adjust the level of difficulty either for games or training applications. While most identification methods currently rely on explicit input, implicit user identification is less disruptive and does not impact the immersion of the users. In this work, we introduce a biometric identification system that employs the user’s gaze behavior as a unique, individual characteristic. In particular, we focus on the user’s gaze behavior and head orientation while following a moving stimulus. We verify our approach in a user study. A hybrid post-hoc analysis results in an identification accuracy of up to 75% for an explainable machine learning algorithm and up to 100% for a deep learning approach. We conclude by discussing application scenarios in which our approach can be used to implicitly identify users.

- Auda, Jonas; Mayer, Sven; Verheyen, Nils; Schneegass, Stefan: Flyables: Haptic Input Devices for Virtual Reality using Quadcopters. In: ResearchGate (ed.): VRST. 2021. doi:10.1145/3489849.3489855

- Latif, Shahid; Agarwal, Shivam; Gottschalk, Simon; Chrosch, Carina; Feit, Felix; Jahn, Johannes; Braun, Tobias; Tchenko, Yanick Christian; Demidova, Elena; Beck, Fabian: Visually Connecting Historical Figures Through Event Knowledge Graphs. In: 2021 IEEE Visualization Conference (VIS) - Short Papers. IEEE, 2021. doi:10.1109/VIS49827.2021.9623313

Knowledge graphs store information about historical figures and their relationships indirectly through shared events. We developed a visualization system, VisKonnect, for analyzing the intertwined lives of historical figures based on the events they participated in. A user’s query is parsed for identifying named entities, and related data is retrieved from an event knowledge graph. While a short textual answer to the query is generated using the GPT-3 language model, various linked visualizations provide context, display additional information related to the query, and allow exploration.

- Auda, Jonas; Grünefeld, Uwe; Pfeuffer, Ken; Rivu, Radiah; Alt, Florian; Schneegass, Stefan: I'm in Control! Transferring Object Ownership Between Remote Users with Haptic Props in Virtual Reality. In: Proceedings of the 9th ACM Symposium on Spatial User Interaction (SUI). Association for Computing Machinery, 2021. doi:10.1145/3485279.3485287

- Saad, Alia; Liebers, Jonathan; Gruenefeld, Uwe; Alt, Florian; Schneegass, Stefan: Understanding Bystanders’ Tendency to Shoulder Surf Smartphones Using 360-Degree Videos in Virtual Reality. In: Proceedings of the 23rd International Conference on Mobile Human-Computer Interaction (MobileHCI). Association for Computing Machinery, Toulouse, France, 2021. doi:10.1145/3447526.3472058

Shoulder surfing is an omnipresent risk for smartphone users. However, investigating these attacks in the wild is difficult because of either privacy concerns, lack of consent, or the fact that asking for consent would influence people’s behavior (e.g., they could try to avoid looking at smartphones). Thus, we propose utilizing 360-degree videos in Virtual Reality (VR), recorded in staged real-life situations on public transport. Despite differences between perceiving videos in VR and experiencing real-world situations, we believe this approach allows novel insights into observers’ tendency to shoulder surf another person’s phone authentication and interaction. By conducting a study (N=16), we demonstrate that a better understanding of shoulder surfers’ behavior can be obtained by analyzing gaze data during video watching and comparing it to post-hoc interview responses. On average, participants looked at the phone for about 11% of the time it was visible and could remember half of the applications used.

- Faltaous, Sarah; Gruenefeld, Uwe; Schneegass, Stefan: Towards a Universal Human-Computer Interaction Model for Multimodal Interactions. In: Proceedings of the Conference on Mensch Und Computer (MuC). Association for Computing Machinery, Ingolstadt, Germany, 2021, pp. 59-63. doi:10.1145/3473856.3474008